Latest News

Ad Spending on Digital Video Expected To Grow 16% in 2024

By Jon Lafayette

IAB forecast calls for CTV to jump 12% to $22.7 billion

Comcast Earnings Flat as Video, Broadband Subscriber Losses Continue

By Jon Lafayette

Peacock adds 3 million subscribers, cuts losses

TelevisaUnivision Q1 Loss Widens but DTC Business Expected To Turn Profit in Second Half

By Jon Lafayette

U.S. advertising sales edge up

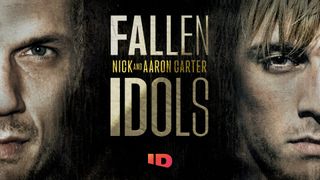

Nick Carter, Aaron Carter Subject of New Investigation Discovery Docuseries

By R. Thomas Umstead

Four-part series to delve into the lives of the two troubled stars

Attend 'The Business of TV News' Event in D.C. on May 2

By Michael Malone

Martha Raddatz, Shannon Bream, Glenn Kirschner set to deliver keynotes

MTV Moving VMAs To New UBS Arena in New York

By Jon Lafayette

Event will air live worldwide on September 10

Weekly Cable Ratings: Fox News Channel Outpaces NBA, NHL Postseason

By R. Thomas Umstead

News network wins in primetime, total day as playoffs propel ESPN, TNT into primetime top 3

Jo Ann Ross Steps Down as Ad Sales Chairman at Paramount

By Jon Lafayette

Move comes weeks before upfront
